Machine Learning: LLM/VLM Training and Engineering by Stas Bekman

My name is Stas Bekman and I'm a software engineer who enjoys tinkering and building reliable systems, excels at identifying and solving problems, and writes about it.

I have been writing software since 1994.

I have worked in multiple domains, taught for many years at major tech conferences and user groups, and published several books. Currently I specialize in training large language models (LLMs), including multi-modal ones, in the PyTorch ecosystem.

LLM/VLM Training

I have been working on various Natural Language Processing tasks - from ML translation to generative models. But my main focus is training Large Language Models (LLM) and Visual Language Models (VLM).

Performance Optimization and Problem Solving

While I can build a whole system from the ground up, I have a knack, intuition and extensive experience in dealing with a variety of software problems. In particular, I'm good at identifying and sorting out performance issues, such as memory leaks and speed bottlenecks, as well as various other types of bugs in systems (especially difficult ones).

Most of the time ML users don't realize that they waste money and resources because their programs are inefficient and some hardware components cause bottlenecks. Sure, you can always throw more money at your project to buy or rent more powerful hardware and waste more electricity and time, but very often you can instead make your software lean and efficient.

I maintain the Machine Learning Engineering Open Book, as well as The Art of Debugging, where I share methodologies for fast, successful debugging.

Current Work

Currently I work at Snowflake AI Research, developing efficient post-training software in a great team of DeepSpeed creators.

Occasionally I do Machine Learning consulting. Please email me at stas@stason.org if you think I can be of help to your company. For the scope of my expertise please see Machine Learning Engineering Open Book and The Art of Debugging - the latter is still an early WIP.

Earlier, at Contextual.AI, I built a distributed training framework, and trained and ran inference on Retrieval Augmented Generation (RAG) LLMs.

Prior to that I was at Hugging Face (2020-2023). You can see my contributions to the HuggingFace Transformers library. I'm also involved in many other internal and external projects, such as DeepSpeed and PyTorch.

One of my major accomplishments was the massive training on 384 A100 GPUs of the 176B-parameter BLOOM model (similar to GPT-3), which as of 2022 was the largest open-access multilingual language model. I was the lead engineer on this project.

In 2023, with my team, I trained the IDEFICS-80b multimodal model on 512 A100 GPUs.

Even earlier, around 2020, I helped develop fastai.

Papers

- Arctic Long Sequence Training: Scalable And Efficient Training For Multi-Million Token Sequences

- Universal Checkpointing: Efficient and Flexible Checkpointing for Large Scale Distributed Training

- The Case for Co-Designing Model Architectures with Hardware

- OBELICS: An Open Web-Scale Filtered Dataset of Interleaved Image-Text Documents

- What Language Model to Train if You Have One Million GPU Hours?

- Datasets: A Community Library for Natural Language Processing

- BLOOM: A 176B-Parameter Open-Access Multilingual Language Model

Awards

I received a PyTorch community award in 2023.

Contact

I live on Vancouver Island, BC, Canada.

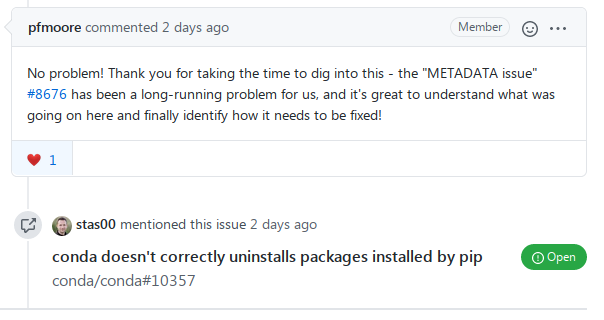

Some samples of my problem solving in the open source projects